> The training sets of labeled images that are ubiquitous in contemporary computer vision and AI are built on a foundation of unsubstantiated and unstable epistemological and metaphysical assumptions about the nature of images, labels, categorization, and representation. Furthermore, those epistemological and metaphysical assumptions hark back to historical approaches where people were visually assessed and classified as a tool of oppression and race science.

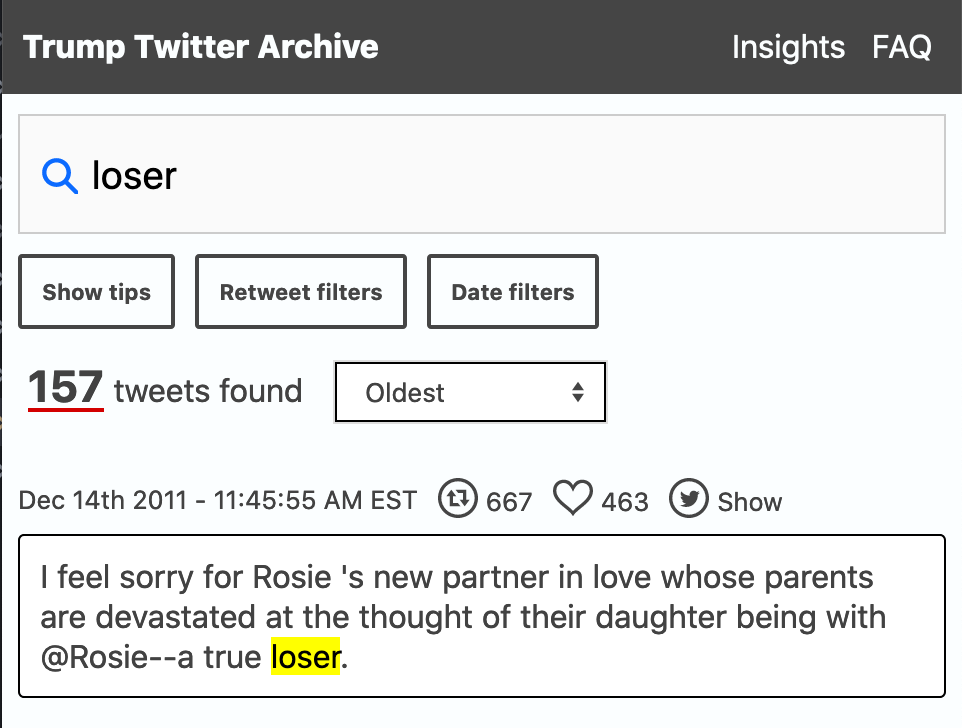

Excavating AI is an important paper by Kate Crawford and Trevor Paglen that looks at "The Politics of Images in Machine Learning Training Sets." They examine the different ways that politics and assumptions can creep into training datasets that are (or were) widely used in AI:

- There is the overall taxonomy used to annotate (label) the images

- There are the individual categories, which can be problematic or irrelevant

- There are the images themselves and how they were obtained


They point out that many of the image datasets used for face recognition have been trimmed or taken down after being criticized, but they may still be influential because copies were downloaded and continue to circulate in AI labs. These datasets, along with their assumptions, have also been used to train commercial tools.

I particularly like how the authors describe their work as an archaeology, perhaps in reference to Foucault (though they don't mention him).

I would argue that we need an ethics of care and repair to maintain these datasets so that they remain useful.