The Atlantic has a thoughtful article titled, AI Isn’t Coming for Everyone’s Job. The article points out that player pianos automated the playing of pianos in the early 1900s and could even play things humans didn’t have enough fingers for, but that didn’t put piano players out of work.

How could humans possibly compete? Yet today you are more likely to encounter a piano player than a player piano, despite the job being successfully automated a very long time ago. The automatons have been relegated to museums and the rare curiosity. Pianists can be found any night of the week in hotel lobbies, Italian restaurants, and concert halls.

The article goes on to talk about how live music is still appreciated even though many musicians can’t play as well as what you can get on recorded (or automated) music. People like to see, hear, and interact with other people.

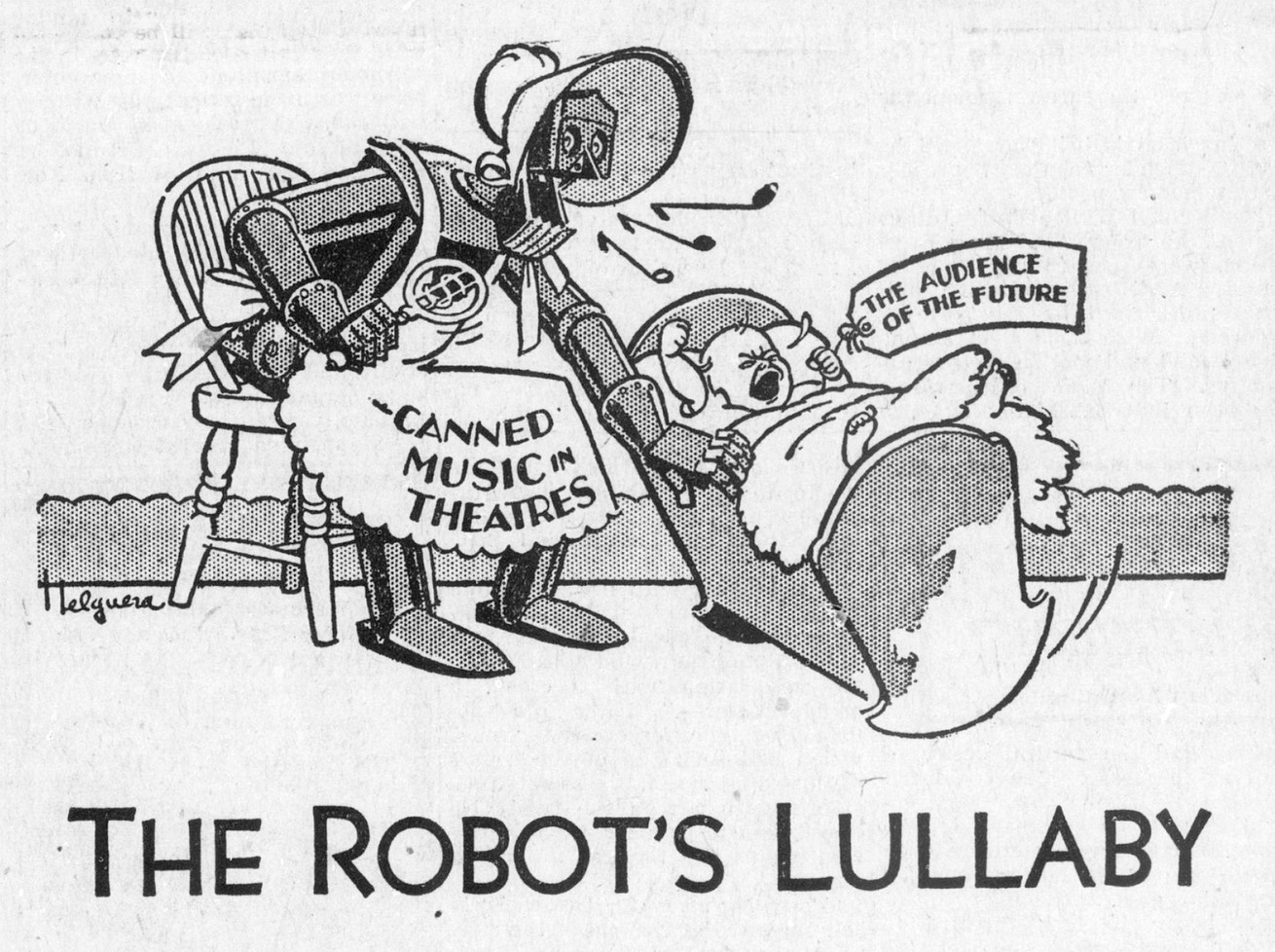

It also mentions how people fought back. Above you see an image from an ad in 1930. Earlier John Philip Sousa coined the phrase “canned music” in 1906 to mock the automated sound. (At the time the cylindrical records came in can shaped containers.) According to the Wikipedia article, he testified to Congress,

These talking machines are going to ruin the artistic development of music in this country. When I was a boy… in front of every house in the summer evenings, you would find young people together singing the songs of the day or old songs. Today you hear these infernal machines going night and day. We will not have a vocal cord left. The vocal cord will be eliminated by a process of evolution, as was the tail of man when he came from the ape.

Sounds like some of the concerns we have about AI today, but again, I suspect live music will survive.

The problem is more likely to be arts where there isn’t a live person performing or interacting with you. Does it really matter if illustrations in magazines are made by humans, AIs or hybrids as long as they catch the eye and illustrate the topic? Perhaps the visual arts will shift to live performance art or those online performances like those for YouTube by Bob Ross.