An article on Semafor points out that OpenAI has changed their list of “Core Values” on their Careers page. Previously, they listed their values as being:

Audacious, Thoughtful, Unpretentious, Pragmatic & Impact-Driven, Collaborative, and Growth-oriented

Now, the list of values has been changed to:

AGI focus, Intense and scrappy, Scale, Make something people love, Team spirit

In particular, the first value reads:

AGI focus

We are committed to building safe, beneficial AGI that will have a massive positive impact on humanity’s future.

Anything that doesn’t help with that is out of scope.

This is an unambiguous change from the value of being “Audacious”, which they had glossed with “We make big bets and are unafraid to go against established norms.” They are now committed to AGI (Artificial General Intelligence), which they define on their Charter page as “highly autonomous systems that outperform humans at most economically valuable work”.

It would appear that they are committed to developing AGI that can outperform humans at work that pays, and to making that beneficial. I can’t help wondering why they aren’t also open to developing AGIs that can perform work that isn’t necessarily economically valuable. For that matter, what if the work AGIs can do becomes uneconomic because it can be cheaply done by an AI?

More challenging is the tension around developing AIs that can outperform humans at work that pays. How can creating AGIs that can take our work become a value? How will they make sure this is going to benefit humanity? Is this just a value in the sense of a challenge (can we make AIs that can make money?) or is there an underlying economic vision, and what would that be? I’m reminded of the ambiguous picture Ishiguro presents in Klara and the Sun of a society where only a minority of people are competitive with AIs.

Diversity Commitment

Right above the list of core values on the Careers page, there is a strong diversity statement that reads:

The development of AI must be carried out with a knowledge of and respect for the perspectives and experiences that represent the full spectrum of humanity.

This is not in the list of values, but it is designed to stand out and introduce them. One wonders if this is just an afterthought or virtue signalling. Given that it is on the Careers page, it could be a warning about what they expect of applicants: “Don’t apply unless you can talk EDI!” It isn’t a commitment to diverse hiring; it is more about what they expect potential hires to know and respect.

Now, they can develop a chatbot that can test applicants’ knowledge of and respect for diversity and save themselves the trouble of diversity hiring.

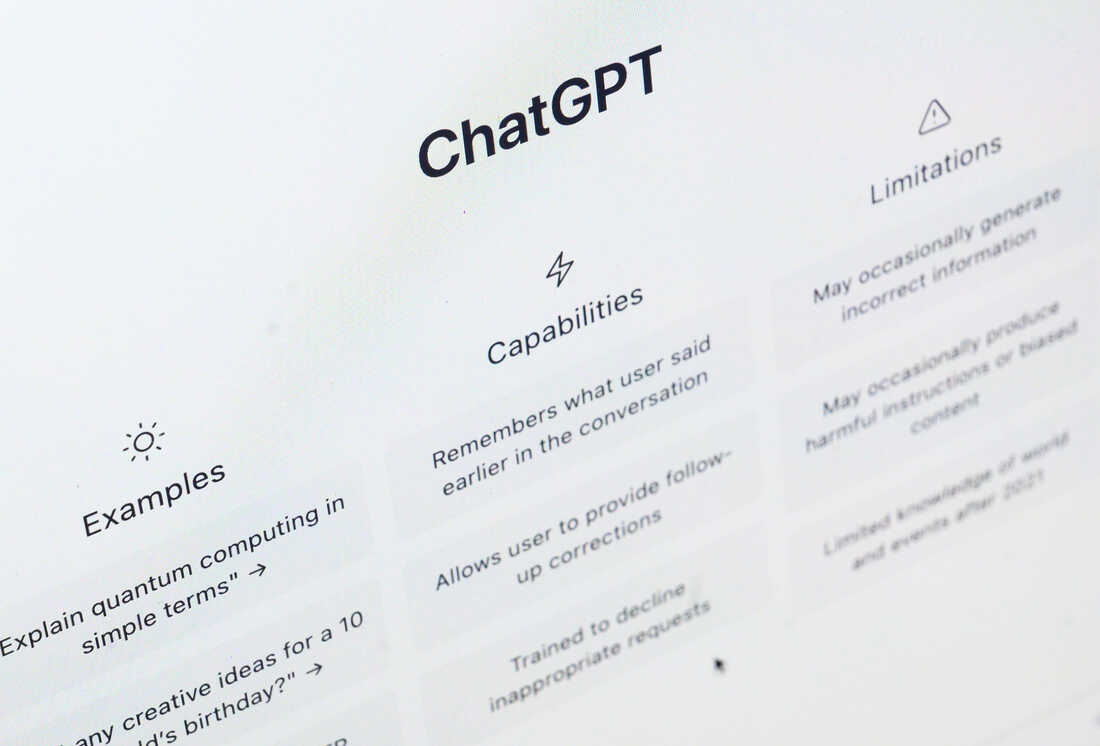

(Minor edits suggested by ChatGPT.)