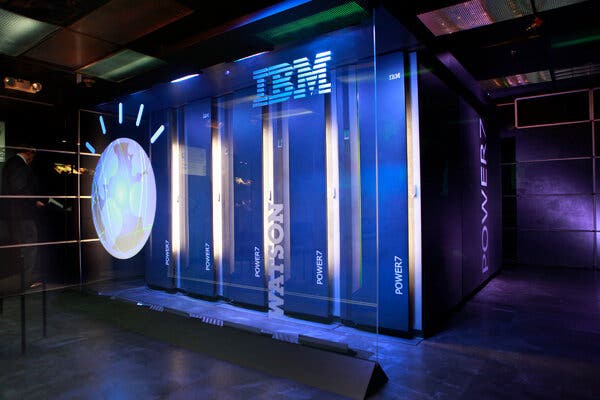

IBM’s artificial intelligence was supposed to transform industries and generate riches for the company. Neither has panned out. Now, IBM has settled on a humbler vision for Watson.

The New York Times has a story titled What Ever Happened to IBM’s Watson? The story is a warning to all of us about the danger of extrapolating from intelligent behaviour in one limited domain to others. Watson got good enough at trivia question answering (or posing) to win at Jeopardy!, but that didn’t scale out.

IBM’s strategy is interesting to me. Developing an AI to win at a game like Jeopardy! repeats what IBM did with Deep Blue, which won at chess in 1997. Winning at a game considered paradigmatically a game of intelligence is a great way to get public relations attention.

Interestingly, what seems to be working with Watson is not the moon-shot, game-playing type of service, but the automation of basic natural language processing tasks.

Having recently read Edwin Black’s IBM and the Holocaust: The Strategic Alliance Between Nazi Germany and America’s Most Powerful Corporation, I must say that the choice of the name “Watson” grates. Thomas Watson was responsible for IBM’s ongoing engagement with the Nazis, for which he got a medal from Hitler in 1937. Watson didn’t seem to care how IBM’s data processing technology was being used to manage people, especially Jews. I hope the CEOs of AI companies today are more ethical.