Yesterday we went to the Truth and Reconciliation Commission event here in Edmonton, Alberta. This is the last national event before the commissioners start working on a report for 2015.

No blog entry can capture the learning and emotions of attending just a small part of the event. In the end I could only listen to some of the testimony before being overcome. I will never forget a survivor of a residential school here in St. Albert (outside of Edmonton) talking about how he and other boys would be sent out into the cold to dig graves for those who died. Imagine boys of 9 to 13 in minus 30 degree weather burying their classmates with no support from anyone.

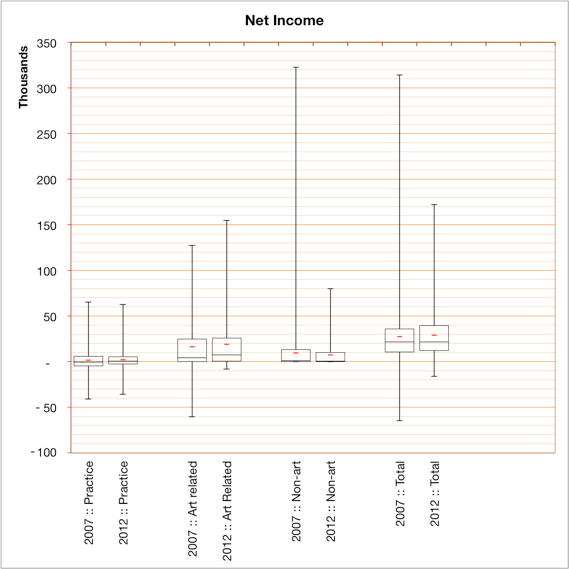

I am reminded of Hannah Arendt’s phrase “banality of evil,” which she uses to describe the character of a different evil. This evil unfolded with educational intentions, something we educators should remember. This evil unfolded with the complicity of the major churches who set up and ran the schools, something those of us who belong to churches should remember. Here is a map of the residential schools run by the Anglican church to which I belong. This evil affects the survivors and their families still. Homelessness is one lasting impact of Indian residential schools.

In his closing address, Commission Chair Justice Murray Sinclair talked about how, now that we have heard truth, we need to turn to reconciliation. As one of the final speakers put it, “The Journey is On!”