From Humanist I learned about the Inaugural Lord Renwick Memorial Lecture w/ Vint Cerf (Digital Policy Alliance), available on the Internet Archive. The lecture is also available as a text transcript here (PDF). Vint Cerf is one of the pioneers of the Internet, and in this lecture he talks about the “five alligators of the Internet.” They are 1) Technology, 2) Regulation, 3) Institutions, 4) the Digital Divide, and 5) Digital Preservation.

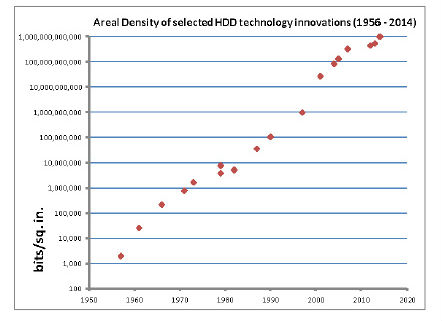

Under Technology he traces a succinct history of the Internet as a technology, pointing out how important the ALOHAnet project was to its eventual design. Under Regulation he talks about different levels of regulation and their pros and cons. Later there are some questions about anonymity and civil discourse. All told, the talk does a great job of covering the issues facing the Internet today.

Here is his answer to a question about how to put more humanity into the Internet.

The first observation I would make is that civility is a social decision that we either choose or don’t. Creating norms is very important. I think norms are not necessarily backed up by, you know, law enforcement for example, they’re considered societal values, and I fear that openness in the Internet has led to a, let’s say, a diminution, erosion, of civil discourse. I would suggest to you, however, that it’s possibly understandable in the following analog. Those of you who drive cars may, like I do, say things to the other drivers, or about the other drivers, that I would never say face to face, but there’s this windshield separating me from the other drivers, and I feel free to express myself, in ways that I would not if I were face to face. Sometimes I think the computer screen acts a little bit like the windshield of the car and allows us to behave in ways that we wouldn’t otherwise if we were right there with the target of our comments. Somehow we have to infuse back into society the value of civil discourse, and the only way to do that I think is to start very early on in school to introduce children, and their parents, and adults, to the value of civility in terms of making progress in coming together, finding common ground, finding solutions to things, as opposed to simply firing our 45 caliber Internet packets at each other. I really hope that the person asking the question has some ideas for introducing incentives for exactly that behavioral change. I will point out that seatbelts and smoking has possibly some lessons to teach, where we incorporated not only advice but we also said, by the way, if we catch you smoking in this building, there will be consequences, because we said you shouldn’t do it. So, maybe we have to have some kind of social consequence for bad behavior. (p. 13-4)

Later on he extends the driving analogy to license plates: your car gives you some anonymity, but the license plate can be used to identify you if you go too far.