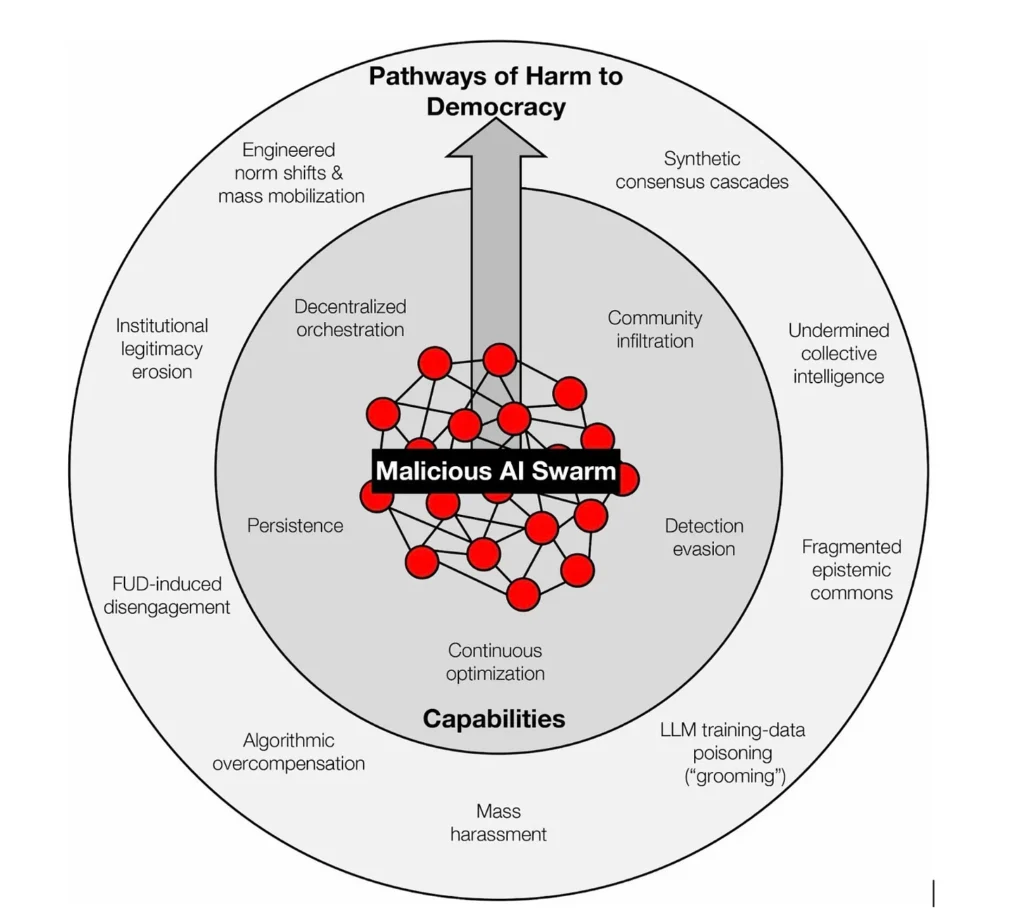

The Norwegian Consumer Council has released a punchy video about enshittification. The web site shown at the end of the video leads to a page about Breaking Free, which links to a report, Breaking Free: Pathways to a Fair Technological Future (PDF). The report argues that generative AI is the next frontier of enshittification, pointing to how AI can generate the large quantities of slop now sloshing around the internet.

The neologism enshittification was coined by Cory Doctorow. His web site has links to his book on it and videos of him talking about it.

The good news is, as Doctorow puts it in his book on the subject, “A new, good internet is possible. More than that, it is essential.” Section 5, the final section of the Norwegian report, offers advice on how we can break free.

For me, it is essential that we resist the network effect and just drop services that become unacceptably shitty. When a service changes its privacy settings, just drop it. It may be painful and it may feel as if your social life won’t recover, but that is what they want you to believe.