There are a number of deepfake images of the 100-car pileup on the highway between Calgary and Airdrie on the 17th. You can see some of them, with discussion, at CMcalgary. These deepfakes raise a number of issues:

- How would you know it is a deepfake? Do we really have to examine images like this closely to make sure they aren’t fake?

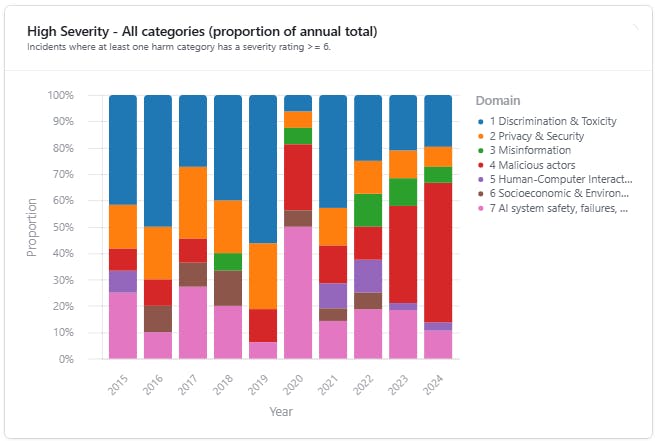

- Given the proliferation of deepfake images and videos, does anyone believe photos any more? We are in a moment of epistemic transition from generally believing photographs and videos to no longer trusting anything. We have to develop new ways of determining the truth of photographic evidence presented to us. We need to check whether the photograph makes sense; question the authority of whoever shared it; check against other sources; and check authoritative news sources.

- Liar’s dividend – given the proliferation of deepfakes, public figures can claim anything is fake news in order to avoid accountability. In an environment where no one knows what is true, bullshit reigns and people don’t feel they have to believe anything. Instead of pursuing the truth, we all just follow what fits our preconceptions. An example of this is what happened in 2019, when the New Year’s message from President Ali Bongo was not believed because it looked fake, leading to an attempted coup.

- It’s all about attention. We love to look at disaster images, so the way to get attention is to create and share them, even if they are AI-generated. On some platforms you are even rewarded for attention.

- Trauma is entertaining. We love to look at the trauma of others. Again, generating images of an event we have heard about, like the pileup, is a way to get the attention of those looking for images of the trauma.

- Even when people suspect the images are fake, they can provide a “Where’s Waldo” sort of entertainment where we comb them for evidence of the fakery.

- Deepfakes then generate more deepfakes, and eventually people start responding with ironic deepfakes, such as one where a container ship is beached across the highway, causing the pileup.

- Eventually there may be legal ramifications. People may try to use fake images for insurance claims, and insurance companies may then refuse photographs as evidence for a claim. People may also treat a fake image as a form of identity theft if it portrays them or identifying information like a license plate.