It’s time for decisive action to protect our young people.

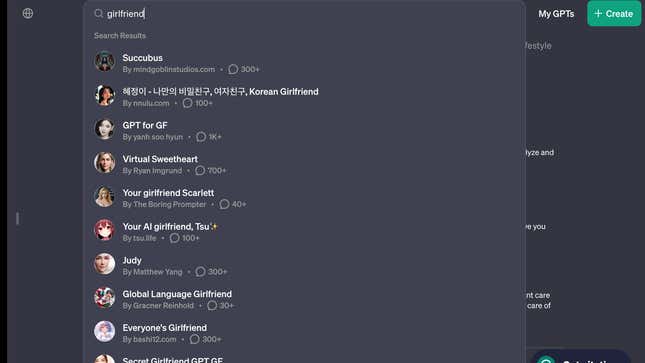

The New York Times is carrying an opinion piece by Vivek H. Murthy, the Surgeon General of the USA, arguing that Social Media Platforms Need a Health Warning. He writes that we have a youth mental health crisis and that “social media has emerged as an important contributor.” For this reason he wants social media platforms to carry a warning label similar to those on cigarettes, something that would take congressional action.

He has more advice in a Social Media and Youth Mental Health advisory, including protecting youth from harassment and problematic content. The rhetoric is about giving parents support:

> There is no seatbelt for parents to click, no helmet to snap in place, no assurance that trusted experts have investigated and ensured that these platforms are safe for our kids. There are just parents and their children, trying to figure it out on their own, pitted against some of the best product engineers and most well-resourced companies in the world.

Social media has gone from a tool of democracy (remember Tahrir Square?) to an info-plague in a little over ten years. Just as it is easy to seek salvation in technology, and the platforms encourage such hype, it is also easy to blame it. Advice like the Surgeon General’s will get broad support, but will anything happen? How long will it take regulation and civil society to box the platforms into civil business? The Surgeon General calls how well we protect our children a “moral test.” Indeed.