The Guardian has a story by John Burn-Murdoch titled Study: less than 1% of the world’s data is analysed, over 80% is unprotected.

The Guardian article covers a Digital Universe Study, which finds that the “global data supply reached 2.8 zettabytes (ZB) in 2012” and that “just 0.5% of this is used for analysis”. The industry study emphasizes that the promise of “Big Data” is in its analysis,

First, while the portion of the digital universe holding potential analytic value is growing, only a tiny fraction of territory has been explored. IDC estimates that by 2020, as much as 33% of the digital universe will contain information that might be valuable if analyzed, compared with 25% today. This untapped value could be found in patterns in social media usage, correlations in scientific data from discrete studies, medical information intersected with sociological data, faces in security footage, and so on. However, even with a generous estimate, the amount of information in the digital universe that is “tagged” accounts for only about 3% of the digital universe in 2012, and that which is analyzed is half a percent of the digital universe. Herein is the promise of “Big Data” technology — the extraction of value from the large untapped pools of data in the digital universe. (p. 3)
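To get a feel for what those percentages mean in absolute terms, here is a rough back-of-the-envelope sketch. It assumes that all three shares quoted above (25% potentially valuable, 3% tagged, 0.5% analyzed) apply to the 2012 total of 2.8 ZB, and that decimal units are used (1 ZB = 1000 EB). The variable and function names are mine, not the study's.

```python
# Back-of-the-envelope conversion of the study's percentages into volumes.
# Assumptions (not stated in this form by the study): every share applies
# to the 2012 total of 2.8 ZB, and 1 ZB = 1000 EB (decimal units).

ZB_IN_EB = 1000
TOTAL_ZB_2012 = 2.8

shares = {
    "potentially valuable if analyzed": 0.25,
    "tagged": 0.03,
    "analyzed": 0.005,
}


def share_to_exabytes(total_zb, share):
    """Convert a share of the total (in ZB) to an absolute volume in EB."""
    return total_zb * share * ZB_IN_EB


volumes = {label: share_to_exabytes(TOTAL_ZB_2012, s) for label, s in shares.items()}

for label, eb in volumes.items():
    print(f"{label}: about {eb:.0f} EB")

# How much of the potentially valuable data is actually analyzed?
analyzed_of_valuable = shares["analyzed"] / shares["potentially valuable if analyzed"]
print(f"analyzed share of potentially valuable data: {analyzed_of_valuable:.0%}")
```

Under those assumptions the figures come to roughly 700 EB potentially valuable, 84 EB tagged and 14 EB analyzed, so only about 2% of the data the study considers potentially valuable is being analyzed.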

I can’t help wondering if industry studies aren’t trying to stampede us into thinking that there is lots of money to be made in analytics. These studies often seem to come from the entities that benefit from investment in analytics. What if the value of Big Data turns out to be in getting people to buy into analytical tools and services (or be left behind)? Has there been any critical analysis (as opposed to anecdotal evidence) of whether analytics really do warrant the effort? A good article I came across on the need for analytical criticism is Trevor Butterworth’s Goodbye Anecdotes! The Age of Big Data Demands Real Criticism. He starts with,

Every day, we produce 2.5 exabytes of information, the analysis of which will, supposedly, make us healthier, wiser, and above all, wealthier—although it’s all a bit fuzzy as to what, exactly, we’re supposed to do with 2.5 exabytes of data—or how we’re supposed to do whatever it is that we’re supposed to do with it, given that Big Data requires a lot more than a shiny MacBook Pro to run any kind of analysis.

Of course the Digital Universe Study is not only about the opportunities for analytics. It also points out:

- That data security is going to become more and more of a problem

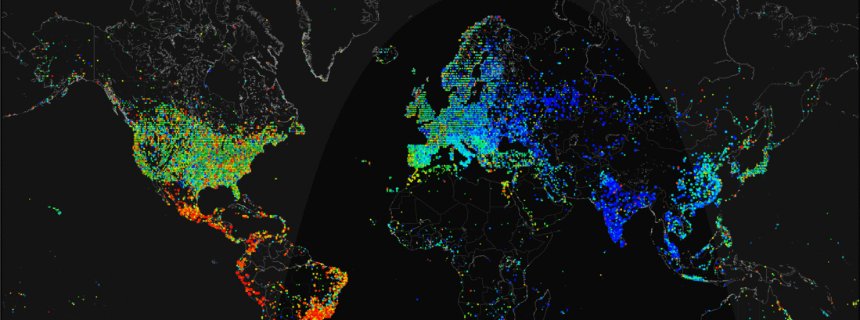

- That more and more data is coming from emerging markets

- That we could get a lot more useful analysis done if there were more metadata (tagging), especially at the source. They are calling for more intelligence in the gathering devices, the surveillance cameras for example. These could add metadata at the point of capture, like time and place, and then stuff like whether there are faces.

- That the promising types of data that could generate value start with surveillance and medical data.

Reading about Big Data I also begin to wonder what it is. Fortunately IDC (who are behind the Digital Universe Study) have a definition,

Last year, Big Data became a big topic across nearly every area of IT. IDC defines Big Data technologies as a new generation of technologies and architectures, designed to economically extract value from very large volumes of a wide variety of data by enabling high-velocity capture, discovery, and/or analysis. There are three main characteristics of Big Data: the data itself, the analytics of the data, and the presentation of the results of the analytics. Then there are the products and services that can be wrapped around one or all of these Big Data elements. (p. 9)

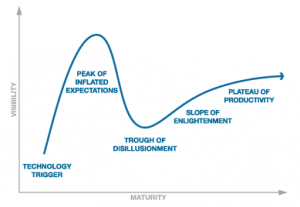

Big Data is not really about data at all. It is about technologies and services. It is about the opportunity that comes with “a big topic across nearly every area of IT.” Big Data is more like Big Buzz. Now we know what follows Web 2.0 (and it was never going to be Web 3.0.)

For a more academic and interesting perspective on Big Data I recommend (following Butterworth) Martin Hilbert’s “How much information is there in the ‘information society’?” (Significance, 9:4, 8-12, 2012.) One of the more interesting points he makes is the growing importance of text,

Despite the general perception that the digital age is synonymous with the proliferation of media-rich audio and videos, we find that text and still images capture a larger share of the world’s technological memories than they did before. In the early 1990s, video represented more than 80% of the world’s information stock (mainly stored in analogue VHS cassettes) and audio almost 15% (on audio cassettes and vinyl records). By 2007, the share of video in the world’s storage devices had decreased to 60% and the share of audio to merely 5%, while text increased from less than 1% to a staggering 20% (boosted by the vast amounts of alphanumerical content on internet servers, hard disks and databases.) The multimedia age actually turns out to be an alphanumeric text age, which is good news if you want to make life easy for search engines. (p. 9)

One of the points Hilbert makes that would support the importance of analytics is that our capacity to store data is catching up with the amount of data broadcast and communicated. In other words, we are getting closer to being able to store most of what is broadcast and communicated. Even more dramatic is the growth in computation. In short, available computation is growing faster than storage, and storage faster than transmission. With excess comes experimentation, and with excess computation and storage, why not experiment with what is communicated? We are, after all, all humanists who are interested primarily in ourselves. The opportunity to study ourselves in real time is too tempting to give up. There may be little commercial value in the Big Reflection, but that doesn’t mean it isn’t the Big Temptation. The Delphic oracle told us to Know Thyself, and now we can in a new way. Perhaps it would be more accurate to say that the value in Big Data is in our narcissism. The services that will do well are those that feed our Big Desire to know more and more (recently) about ourselves, both individually and collectively. Privacy will be trumped by the desire for analytic celebrity, where you become your own spectacle.

This could be good news for the humanities. I’m tempted to announce that this will be the century of the BIG BIG HUMAN. With Big Reflection we will turn on ourselves and consume more and more about ourselves. The humanities could claim that we are the disciplines that reflect on the human and analytics are just another practice for doing so, but to do so we might have to look at what is written in us or start writing in DNA.

In 2007, the DNA in the 60 trillion cells of one single human body would have stored more information than all of our technological devices together. (Hilbert, p. 11)