Whatever happened to The Last One software? The Last One (TLO) was a “program generator” that was supposed to take input from a non-programmer and generate a BASIC program from it.

TLO was developed by a company called D.J. “AI” Systems Ltd., set up by David James, who became interested in artificial intelligence when he bought a computer for his business and apparently got so distracted by that interest that he went bankrupt (and lost his computers). It was funded by an equally colourful character, Scotty Bambury, who made his money as a tire dealer in Somerset. (See here and here.)

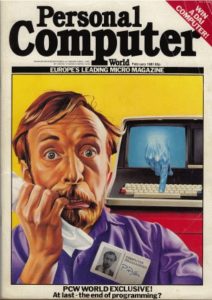

Personal Computer magazine cover from here

The name (The Last One) refers to the expectation that this would be the last software you would need to buy. As the cover image above shows, they were imagining programmers being put out of work by an AI that could reprogram itself: not just the last software you had to buy, but possibly the first AI capable of recursively improving itself. DJ AI could have been spinning up the seed AI that could lead to the singularity!

Here is some of the text from an ad for TLO. The text ran under the spacey headline at the top of this post.

The first program you should buy. …

THE LAST ONE … The program that writes programs!

Now, for the first time, your computer is truly ‘personal’. Now, simply and easily, you can create software the way you want it. …

Yet another sense of “personal” in “personal computer” – a computer where all your software (except, of course, TLO) is personally developed. Imagine a computer that you trained to do what you needed. This was the situation with early mainframes – programmers had to develop the applications individually for each system; they just didn’t have TLO.