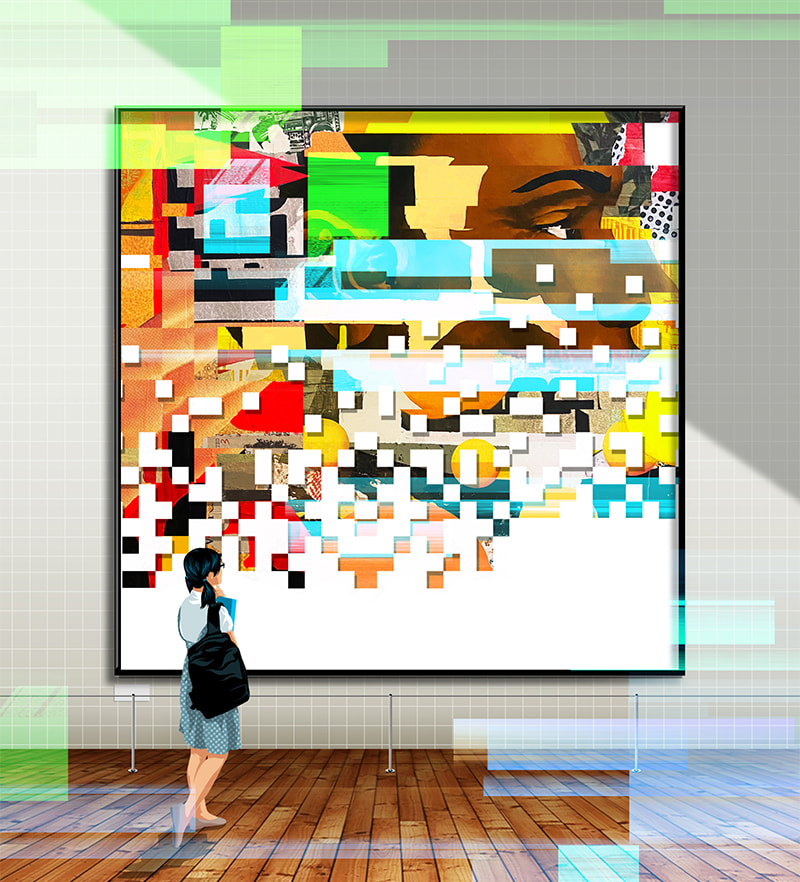

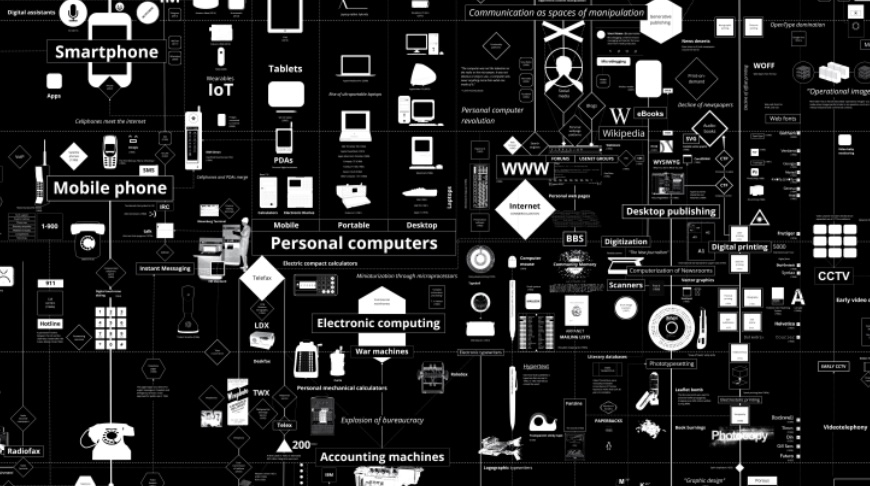

The creative team of Kate Crawford and Vladan Joler who brought us the Anatomy of an AI System have created a much more ambitious long wall sized infographic called Calculating Empires: https://calculatingempires.net.

I saw this at the Jeau de Paume exhibit on The World Through AI. I feel it is the sort of thing I would like a large poster of so I could carefully read it, but … no luck … no posters.

Anyway, it is a fascinating map of communications technology.